We Enable Machines to Listen Like Humans with Speech Cognitive Services

Our Clients

Our Expertise in Speech Recognition Services

What are Cognitive Services

Cognitive Services are collection of machine learning algorithms hosted over cloud that solve problems related to Artificial Intelligence. One of the most exciting parts of cognitive services is Speech recognition. Popular services provide APIs enabling us to add speech recognition capabilities to our application. This simply converts voice/audio into written text that aids quick understanding of content.

Speech recognition services have a plethora of professional and casual uses. Some of the use cases includes: voice control over apps, devices and accessories, transcriptions of meeting notes and conference calls in real-time and automated classification of phone calls.

Speech to Text and Machine Learning

POPULAR SERVICES:

- IBM Watson

- Amazon Transcribe

- Twilio

- Google Speech API

- Azure Cognitive services – Speech to Text for Microsoft

- API.AI

- Speechmatics

- Vocapia Speech to Text API

Watson Cognitive Services

Better Accuracy

Generates accurate transcriptions by applying grammar, language structure and composition guidelines to audio signals.

Speaker Identification

IBM Watson Text to Speech API is Capable of identifying and registering more than one speaker with accuracy and confidence.

Custom Model support

For improved accuracy the API can be customized for the preferred language and content such as names of individuals, sensitive subjects or product names.

Real-time conversation

IBM Watson Speech to Text provides meaningful analytics by transcribing and analyzing audio from a microphone in real-time to pre-recorded files.

support for multiple languages

The IBM Watson Speech to Text Service with its speech recognition capabilities automatically transcribes Arabic, English, Spanish, French, Brazilian Portuguese, Japanese, and Mandarin speech into text.

multiple audio formats supported

Identifies and transcribes discussions with precision, even if the audio quality is low. Supports multiple audio formats (.mp3, .mpeg, .wav, .flac, or .opus) and programming interfaces (HTTP REST, Asynchronous HTTP, Websocket)

context and custom words support

Watson Natural Language Understanding identifies and analyzes text to drive meta-data from content such as keywords, concepts, categories, entities, semantic roles and relations.

For more personalized services, following three Watson Cognitive Services API’s can be used:

IBM WATSON PERSONALITY INSIGHTS

Predicts the needs, values and personality characteristics of an individual, by extracting information from their digital communications, social media and written text.

ibm watson tone analyzer

Detects three types of language tones, using linguistic analysis from text: social tendencies, emotional state and language style.

ibm watson emotion analysis

A fraction of the Alchemy Language API, is useful in measuring the emotions of an individual by analysing his or her writing.

azure cognitive services

real-time conversation

Azure Cognitive Services can be customized to turn on and recognize audio coming from a microphone or any other real-time audio source, and even audio from within a file.

multiple language support

Azure Speech to Text recognizes and transcribes audio in a number of languages in interactive and dictation modes.

multi-mode conversation & dictation

Azure Custom Speech Service supports three modes of recognition: dictation, conversation and interactive. Its recognition mode adjusts speech recognition based on how the users are likely to speak. Depending on their need, users can select the appropriate recognition mode.

bing cognitive services

Azure Cognitive Services, with a single API call enables users to search carefully and systematically billions of images, videos, webpages and news.

google cloud speech

Wide Array of Languages Supported

Google Cloud Speech API is supportive of a global user base as the API is capable of recognizing over 110 languages and variants.

Real-time Conversation Support using gRPC

Multiple Audio Formats Supported

Google speech to text transcribes audio input from pre-recorded to real-times sources and supports multiple audio encodings such as FLAC, PCMU, Linear-16 and AMR.

Google Speech

Easy to use, Google Cloud Speech API applies powerful neural network models to convert audio to text. Google Cloud Speech API facilitates integration of Google speech recognition into developer applications. Developers can send audio and receive transcription in text from the Google Cloud Speech API service.

Good Accuracy Google speech to text uses advanced neural network algorithms for speech recognition. Users should expect enhanced accuracy as Google Speech Recognition technology advances. Developers can also benefit from Google Natural Language Processing that carries out entity analysis, sentiment analysis, syntax analysis and content classification.

Our Findings

noisy background or bad audio quality

For improved Accuracy of the results, the quality of the environment has to be monitored such as the placement of the recording device, phone for in-calls and acoustics of the room. The API is sensitive to noisy background and bad quality audio.

speech overlap during conversation

With people speaking at the same time, it becomes difficult to recognize and transcribe speech.

context of conversation

The API transcribes audio as it recognizes it, causing spoken words to lose context.

accent support

Limited capabilities in drawing classification of non-native speakers.

conversesmartly by folio3

With the development of web application ConverseSmartly (CS), Folio3 has established a strong footprint in the use and application of Machine Learning, Artificial Intelligence and Natural Language Processing.

CS enables organizations and individuals to work smarter, faster and with greater accuracy. The application can be used to analyze dialogue or speech from team meetings, interviews, conferences and seminars and even lectures into text.

Some of our customers success stories

Conversations that Matter

Web application to make your conversations smarter, intelligent and more productive with the use of Machine

Learning, Artificial Intelligence and NLP technological concepts.

Standard Chartered Bank

A suite of innovative productivity apps to make employees happier.

HipLink

Secure, wireless alert management system used by Fortune 500 companies, governments, healthcare providers and

emergency services.

Bitzer (Acquired by Oracle)

A secure, multi-platform (Blackberry, iOS, Android) native mobile app for accessing enterprise data.

Kabuto

Developed iOS and Android apps for the Kabuto collaboration platform.

PACP Oman

An award winning, Sencha based cross platform app for the Public Authority for Consumer Protection.

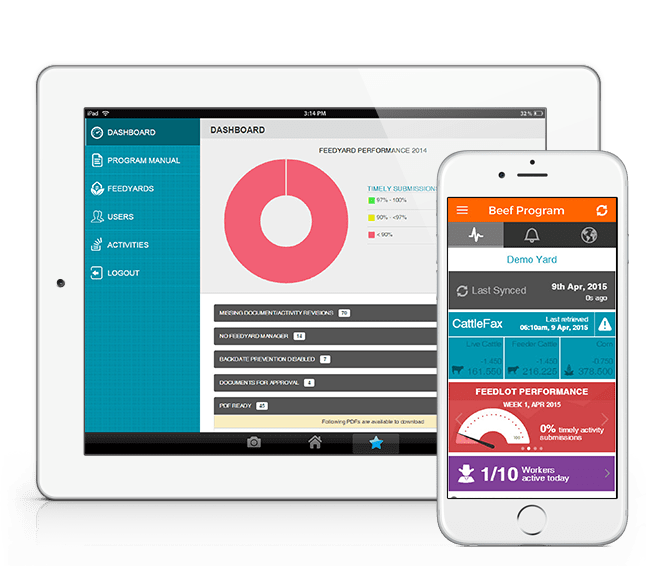

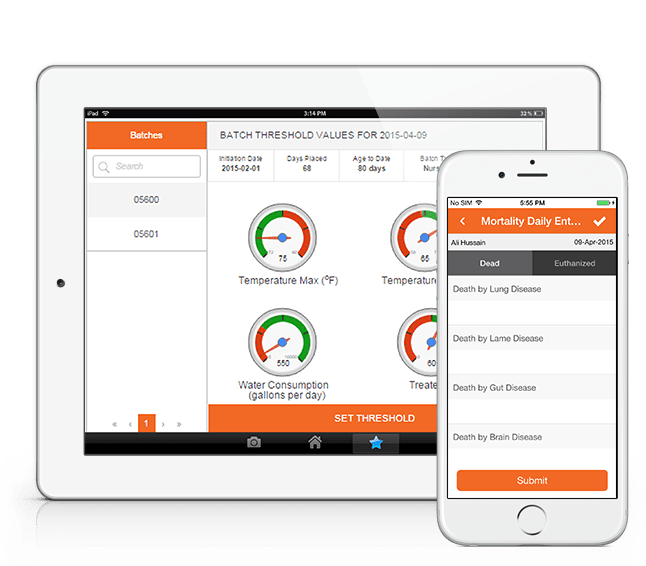

Beef Program App

A Sencha-based solution for the quality management of animal feed yards in the bovine industry. The app facilitates the management of the feed yard’s day to day quality assurance activities, SOPs & maintenance.

Pig Program App

A Sencha-based quality management solution for pig farms that facilitates the monitoring and management of healthy pigs along with providing reporting features for the animals’ veterinary diagnosis and drug administration.

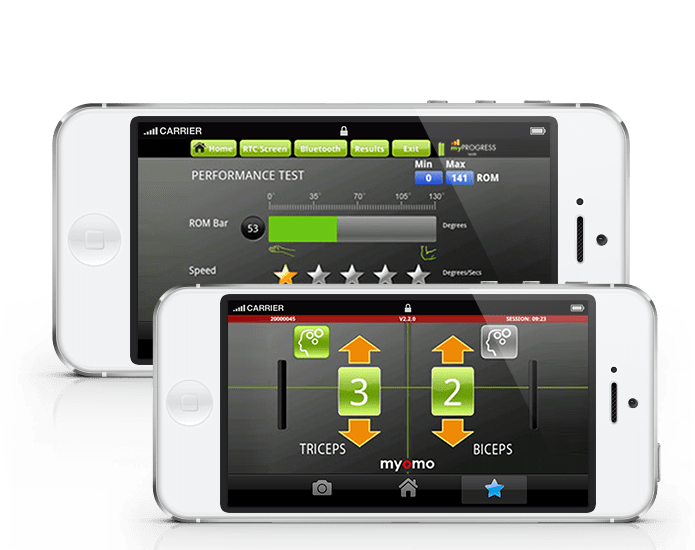

Myomo

Developed two cutting-edge Android apps for easy management of the mPower 1000 – Robotic arm.

Customer Support App

A one on one customer support app for the iPhone that connects consumers to businesses and enables consumers to provide their feedback and report issues/complaints directly to businesses via unstructured, instant messages and get follow-ups in the same manner.

Technologies We Love

WHAT CLIENTS SAY ABOUT US

We were extraordinarily pleased with the functionality and depth of understanding that Folio3's solution exhibited after a relatively brief but incisive, project kickoff meeting. Folio3 "gets it" from the start, relieving us from tedious development discussions drawn out over a long period of time.

Anne Thys

VP Logistics, Sundia Corporation

Folio3 has developed our award winning cross platform app on the Sencha Touch framework and we are very happy with the implementation and the capabilities of the product.

Idrees Shah

Project Consultant, Public Authority for Consumer Protection, Government of Oman

Twinstrata has partnered with Folio3 for several years since the very early days of our company. We have been able to offload a significant portion of our development effort to their team. They have been reliable and responsive to our needs.

Mark Aldred

Director, Product Development, TwinStrata

The Folio3 team has consistently exceeded our expectations. It felt as if we were working with an onshore team. It was their ability to understand our needs and keep us engaged throughout the entire process that has resulted in an exceptional product and a valued partner

Johnny McGuire

Product Manager, TRUETRAC

They have helped us manage and execute the bulk of the engineering work necessary for integrating with our partners in the Airline, Car and Hotel verticals.

Stewart Kelly

Founder & CTO, Sidestep

Whether it's a new development, update or maintenance - Folio3 always shines through. Their turnaround time is always stellar, it's a pleasure to work with them.

Mike Do

Software Engineer, Barnes & Noble

Folio3 nails it again and again. Their development & QA work is absolutely flawless, couldn't have asked for a better technology partner.

Thais Forneret

Back Office Manager, Maestro Conference

Having reliable, high quality product development, QA and marketing support resources gives us more bang for the buck and enables much shorter development timeframes than a US only operation.

Tony Lapine

CEO, HipLink Software

The Folio3 team did an amazing job. They really look out for the customer and try and do the best for them. Very impressed with the final product they delivered. I really enjoyed working with their team and would highly recommend them.

Sarah Schumacher

Progressive Beef Program Manager at Zoetis

Let's Talk About Your Project

Call

Usa

408 365 4638

VISIT

1301 Shoreway Road, Suite 160, Belmont, CA 94002